Three Charts to Watch at NVIDIA's GTC: The Cheaper the Compute, the More Gets Spent

Last night, Jensen Huang announced the Vera Rubin platform at GTC 2026, claiming that its inference performance per watt is 10 times that of Blackwell and that the cost per inference token has fallen to one-tenth. He also hinted that combined orders for Blackwell and Vera Rubin will exceed $1 trillion by 2027.

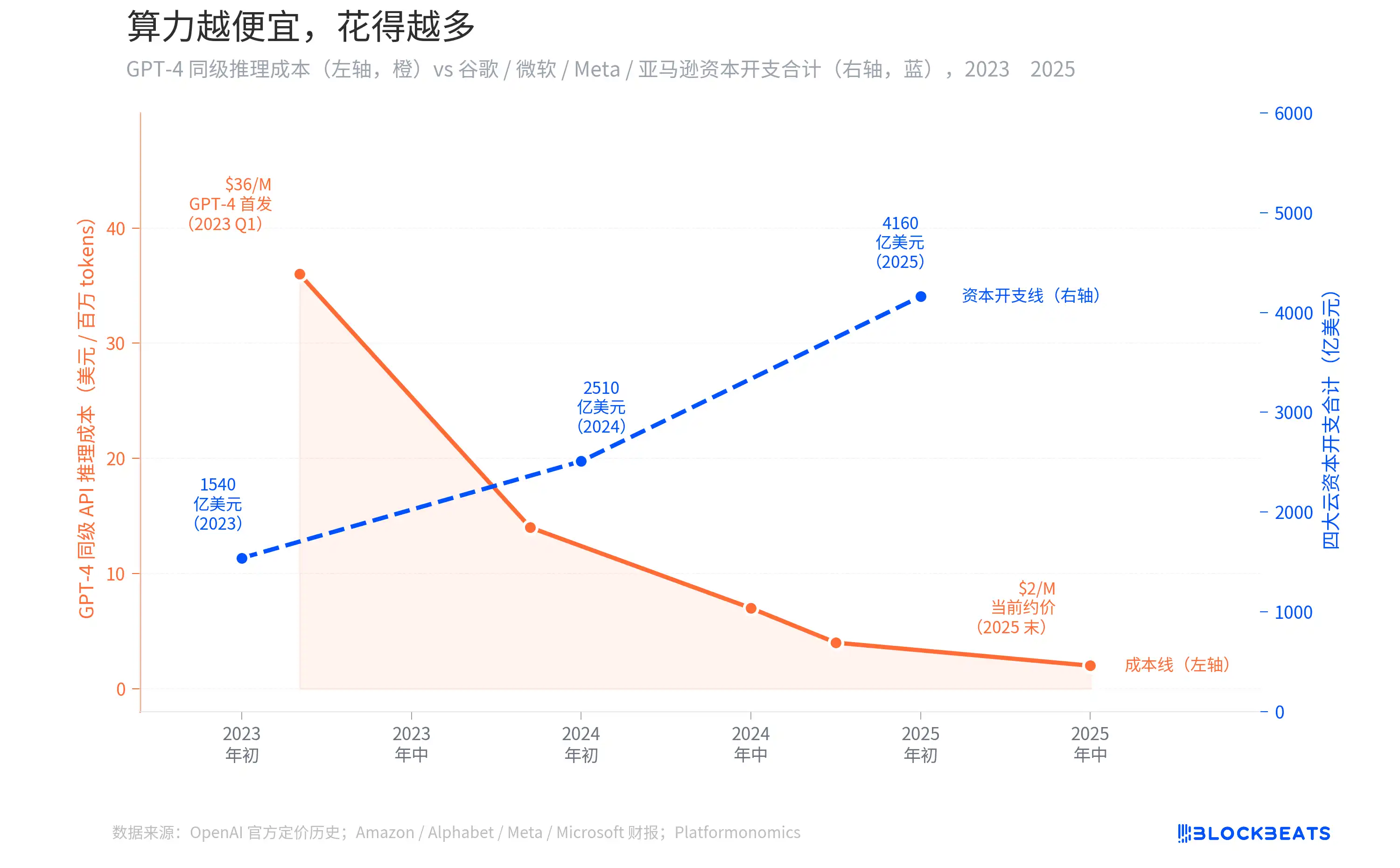

In less than three years, the inference cost of GPT-4-level APIs has plummeted by 94%, from $36 per million tokens to less than $2. Intuitively, as compute gets cheaper, businesses should spend less. Yet the combined capital expenditure of the four cloud providers Amazon, Alphabet, Meta, and Microsoft has risen from $154 billion to $416 billion, a roughly 2.7-fold increase.

Huang's trillion-dollar hint is not just a marketing slogan; behind it is a curve that can be drawn from data.

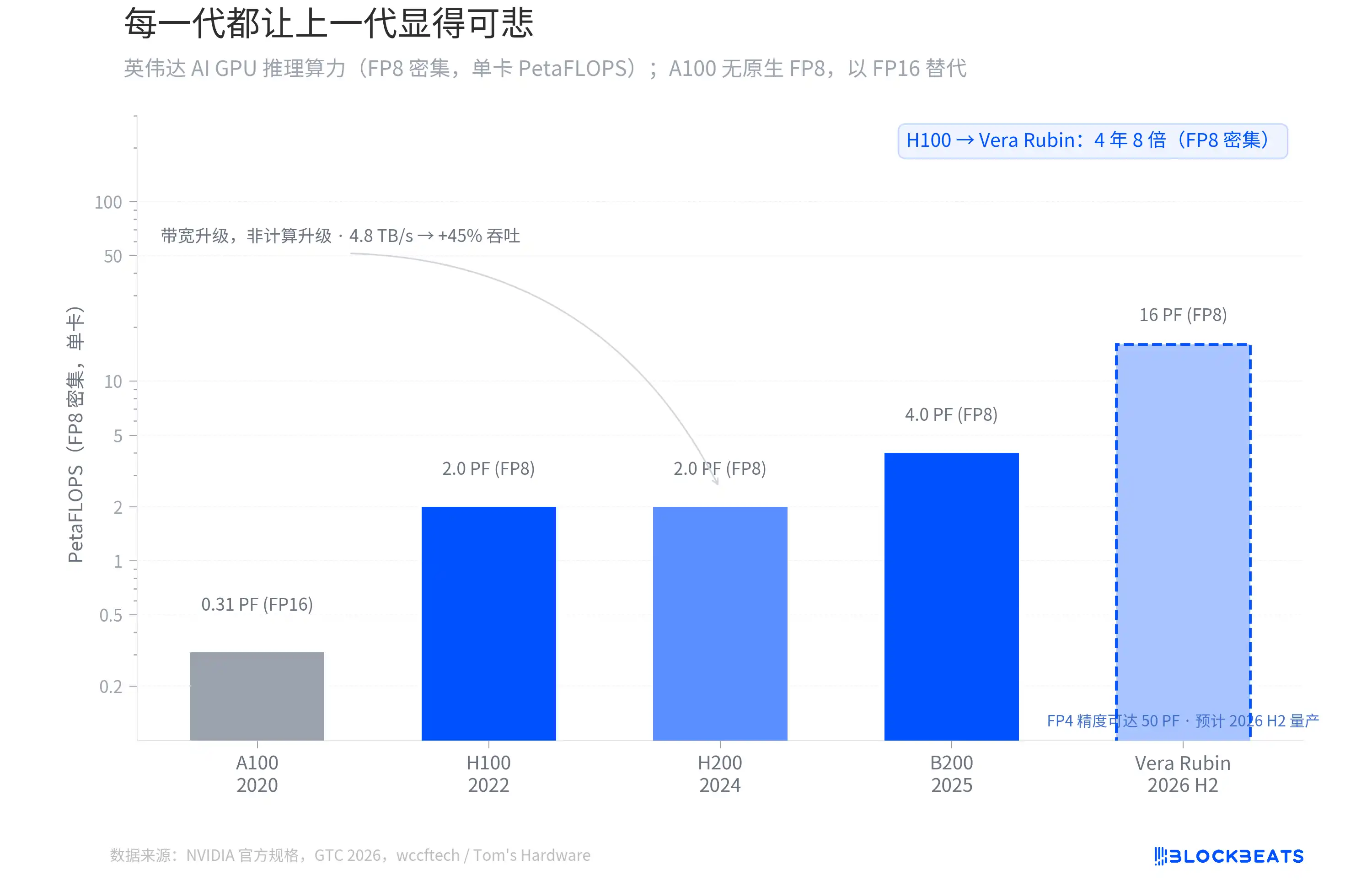

Each Generation Makes the Previous Generation Seem Pathetic

From the H100 of 2022 to Vera Rubin, which is set for mass production in the second half of 2026, the FP8 dense inference compute of NVIDIA's AI GPUs has increased 8-fold in four years. According to NVIDIA's official specifications, a single H100 delivers 2.0 PetaFLOPS (PF), the B200 reaches 4.0 PF, and Vera Rubin jumps to 16 PF.

However, not every generational leap comes from the same place. According to wccftech, the H200's compute cores are identical to the H100's, with no change in FP8 compute; its entire upgrade comes from memory bandwidth (up from 3.35 TB/s to 4.8 TB/s), which brings a roughly 45% increase in inference throughput.

The real architectural transition occurred between B200 and Vera Rubin. Vera Rubin adopts TSMC's 3nm process, featuring a dual-chiplet design with 336B transistors, achieving 50 PF of computing power at FP4 precision. According to Tom's Hardware, the first Vera Rubin system is already running on Microsoft Azure.

There is a subtle distinction that is easy to overlook. When Jensen Huang mentioned "10 times" at GTC, he was referring to the reduction in cost per inference token, not a multiple of raw compute. The token cost reflects Transformer Engine optimization, FP4 precision, larger inference batches, and other system-level factors. Measured in standardized FP8 dense FLOPS, Vera Rubin delivers 4 times the compute of Blackwell and 8 times that of the H100.
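The raw-compute multiples can be checked directly against the per-card figures quoted earlier. The following is a minimal sketch; the dictionary and function names are illustrative, and the numbers are simply the FP8 dense figures cited in this article rather than independently verified specifications.

```python
# FP8 dense compute per card in PetaFLOPS, as quoted in this article.
FP8_DENSE_PF = {"H100": 2.0, "B200": 4.0, "Vera Rubin": 16.0}


def speedup(newer: str, older: str) -> float:
    """Return how many times more FP8 dense compute `newer` has than `older`."""
    return FP8_DENSE_PF[newer] / FP8_DENSE_PF[older]


if __name__ == "__main__":
    for older in ("B200", "H100"):
        print(f"Vera Rubin vs {older}: {speedup('Vera Rubin', older):.0f}x")
```

These ratios cover raw compute only; the 10x token-cost claim also depends on the system-level factors listed above, which this sketch does not model.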

This curve has never flattened. Each generation of GPUs has made the previous one look inadequate, and that is exactly where the next part of the story begins.

Jevons Paradox: The Cheaper the Compute, the More Is Spent

In March 2023, when GPT-4 had just launched, API calls cost about $36 per million tokens. According to OpenAI's official pricing history, the price dropped to around $7 by mid-2024 with the introduction of GPT-4o, and by the end of 2025 the effective price had fallen below $2. That is a decrease of over 94% in less than three years.

Logically, with inference costs dropping so much, businesses should spend less. The reality is the opposite. According to the companies' financial reports and data tracked by Platformonomics, the combined annual capital expenditure of the four cloud providers Amazon, Alphabet, Meta, and Microsoft rose from $154 billion in 2023 to $416 billion in 2025, growth of 170%. Google alone surged from $32 billion to $91.5 billion (about 2.9 times), and Microsoft's increase was even greater.
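The percentages in this section are easy to reproduce from the dollar figures quoted in the text. A minimal sketch, assuming only those quoted figures (the helper name `pct_change` is illustrative):

```python
def pct_change(old: float, new: float) -> float:
    """Percentage change from `old` to `new` (negative means a decline)."""
    return (new - old) / old * 100.0


if __name__ == "__main__":
    # API price per million tokens, USD, as quoted in the article.
    print(f"API price change: {pct_change(36, 2):.1f}%")
    # Combined capex of the four cloud providers, USD billions, 2023 vs 2025.
    print(f"Big-four capex change: {pct_change(154, 416):.1f}%")
    # Google capex multiple, USD billions.
    print(f"Google capex multiple: {91.5 / 32:.2f}x")
```

Taking $2 as the end price gives a slightly conservative decline, since the article says the price fell below $2.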

This phenomenon has a name in economics: the Jevons Paradox. In 1865, the British economist William Stanley Jevons observed that Watt's improvements to the steam engine had greatly increased the efficiency of coal use, yet coal consumption in the UK did not fall; it rose. The reason is simple: higher efficiency made the steam engine more cost-effective, so more industries adopted it, and total demand grew by far more than the efficiency gains saved.

Today, AI inference is following the same pattern. As API prices plummeted to about 6% of their original level, enterprises did not bank the savings; they started deploying AI in scenarios that were previously uneconomical. Each new scenario, such as customer service, code review, content generation, search reranking, and ad bidding, consumes more inference compute. Demand has expanded faster than costs have fallen. In early 2025, DeepSeek R1 pushed the input price down to $0.55 per million tokens, further accelerating this cycle. The two lines moving in opposite directions on the chart are two sides of the same coin.
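One way to make the paradox concrete is a constant-elasticity demand model, in which quantity demanded is Q = k * P^(-e), so total spend P * Q scales as P^(1 - e). When the elasticity e is above 1, a price cut raises total spend. The sketch below is an illustration under that assumed model, not the article's own method; the function names are hypothetical, and using cloud capex as a stand-in for inference spend is a rough proxy only.

```python
import math


def spend_multiplier(price_ratio: float, elasticity: float) -> float:
    """Change in total spend under constant-elasticity demand Q = k * P**(-elasticity).

    Spend is P * Q, so it scales by price_ratio ** (1 - elasticity).
    """
    return price_ratio ** (1.0 - elasticity)


def implied_elasticity(price_ratio: float, spend_ratio: float) -> float:
    """Elasticity that would reconcile a given price ratio with a given spend ratio."""
    return 1.0 - math.log(spend_ratio) / math.log(price_ratio)


if __name__ == "__main__":
    price_ratio = 2 / 36      # API price: $36 -> about $2 per million tokens
    spend_ratio = 416 / 154   # big-four capex: $154B -> $416B (rough proxy only)

    for e in (0.5, 1.0, 1.5):  # hypothetical elasticities
        print(f"elasticity {e}: spend x{spend_multiplier(price_ratio, e):.2f}")

    print(f"implied elasticity: {implied_elasticity(price_ratio, spend_ratio):.2f}")
```

Under these assumptions the implied elasticity comes out above 1, which is the Jevons regime: spending grows even as unit prices collapse.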

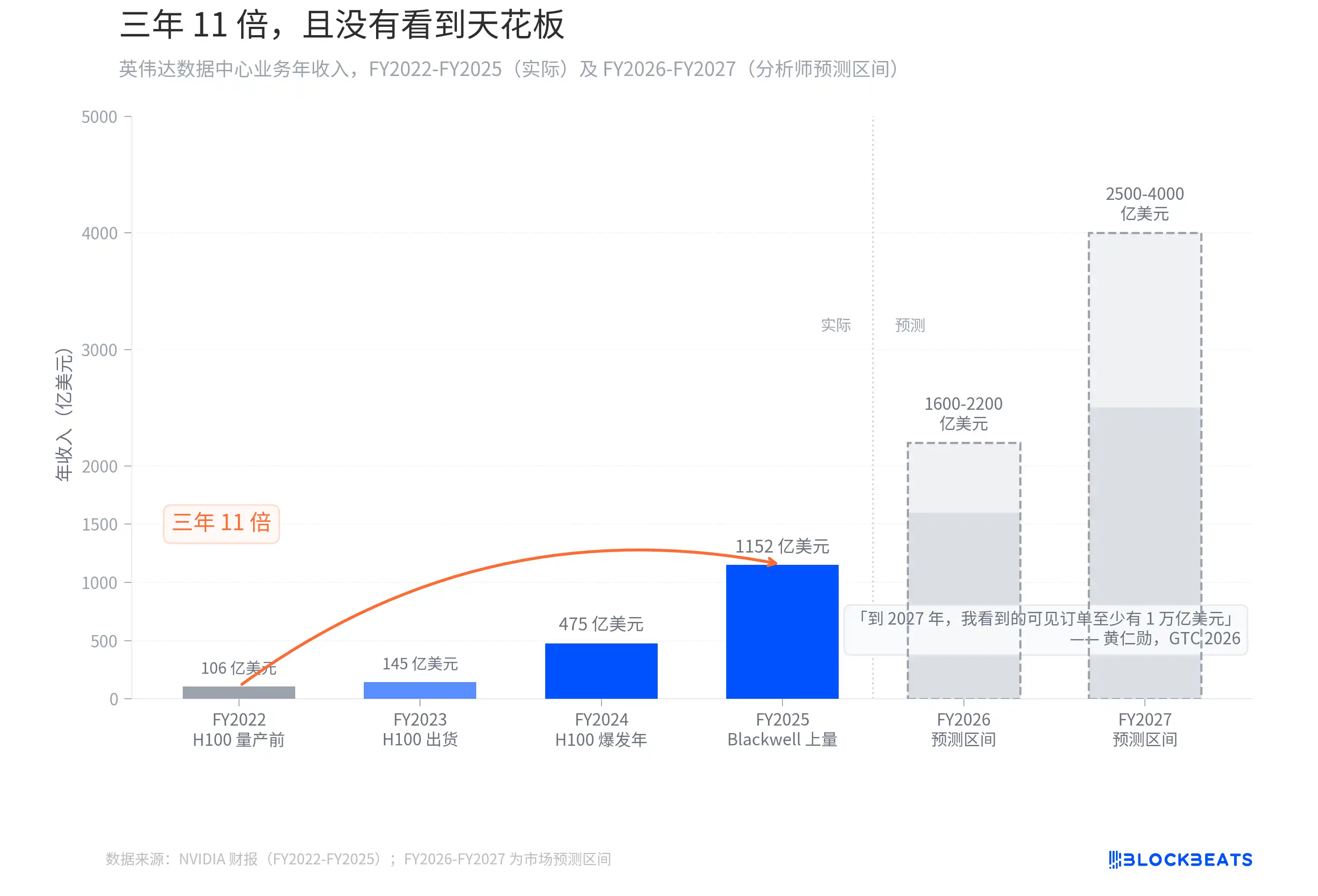

Three years, an 11-fold increase, and no sight of a ceiling

If the Jevons Paradox has one most direct beneficiary, it is the company selling the shovels.

According to NVIDIA's financial reports, annual revenue from the data center business grew from $10.6 billion in FY2022 (ending January 2022) to $115.2 billion in FY2025 (ending January 2025), a 10.9x increase over three fiscal years. This growth curve has almost no precedent in tech history. For comparison, after the iPhone launched in 2007, it took Apple about six years to achieve a revenue increase of a similar order of magnitude.
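The 10.9x multiple can be restated as a compound annual growth rate, which makes it easier to compare with other growth stories. A minimal sketch using only the two revenue figures cited above (the function names are illustrative):

```python
def growth_multiple(start: float, end: float) -> float:
    """Ratio of the ending value to the starting value."""
    return end / start


def cagr(start: float, end: float, years: int) -> float:
    """Compound annual growth rate over `years` periods, as a fraction."""
    return (end / start) ** (1 / years) - 1


if __name__ == "__main__":
    fy2022, fy2025 = 10.6, 115.2  # data center revenue, USD billions, as cited above
    print(f"multiple: {growth_multiple(fy2022, fy2025):.1f}x")
    print(f"CAGR over 3 fiscal years: {cagr(fy2022, fy2025, 3):.0%}")
```

A multiple of this size over three fiscal years means revenue more than doubled each year on average.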

Then, Jensen Huang said at GTC 2026, "By 2027, the visible orders that I see are at least $1 trillion. In fact, our capacity will not be enough. I am confident that the computing demand will far exceed this number."

At last year's GTC, his forecast was around $500 billion in visible orders by 2026. A year later, the number has doubled while the time window has been extended by just one year. Analysts forecast revenue of $160-220 billion for FY2026 and $250-400 billion for FY2027. Huang himself, however, said the $1 trillion figure is not a ceiling: "the computing demand will far exceed this number." On the day GTC ended, NVIDIA's stock price rose by 4.3%. The market evidently chose to believe him.

Each generation of GPUs makes the previous one look pitiful, and each round of price cuts makes the next round of capital expenditure seem natural. NVIDIA currently sits in the sweetest spot of this paradox.
